Hands On: Getting Started with Kafka Connect

Danica Fine

Senior Developer Advocate (Presenter)

In this exercise, you will create a new topic to hold order event data, and set up a Kafka Connect data generator to populate the topic with sample data.

Confluent Cloud offers dozens of pre-built, fully managed connectors. Use the Amazon CloudWatch Logs or Oracle Database source connectors (among many others!) to stream data into Apache Kafka® or choose from a number of sink connectors to help you move your data into a variety of systems, including BigQuery and Amazon S3. Leveraging these managed connectors is the easiest way to use Kafka Connect to build fully managed data pipelines.

Confluent Cloud

For this course, you will use Confluent Cloud to provide a managed Kafka service, connectors, and stream processing.

- Go to Confluent Cloud and create a Confluent Cloud account if you don’t already have one. Otherwise, be sure to log in.

- Create a new cluster in Confluent Cloud. For this exercise, choose the Basic cluster type and keep all of the default configurations. Name the cluster kc-101.

- When you’re finished with this exercise, don’t forget to delete your connector and topic in order to avoid exhausting your free usage. You can keep the kc-101 cluster since we will be using it in other exercises for this course.

FREE ACCESS: The Basic cluster used in this exercise won't incur much cost, and the $25 of free Confluent Cloud usage that you receive with the promo code 101CONNECT will be more than enough to cover it. You can also use the promo code CONFLUENTDEV1 to delay entering a credit card for 30 days.

Create a New Topic

- From the Topics page of your Confluent Cloud cluster, click on Add topic.

- Name the topic orders and ensure that the Number of partitions is set to 6.

- Click on Create with defaults.
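The topic steps above can also be sketched programmatically. This is a minimal sketch, assuming the third-party confluent-kafka Python package is installed and that `conf` holds your cluster's bootstrap servers and API credentials. The helper names `topic_spec` and `create_topic` are hypothetical, and the replication factor of 3 is an assumption rather than something stated in this exercise.

```python
def topic_spec(name: str = "orders", partitions: int = 6) -> dict:
    """Describe the topic from this exercise as plain data."""
    return {
        "name": name,
        "partitions": partitions,
        "replication_factor": 3,  # assumption: not specified in the exercise
    }


def create_topic(conf: dict, spec: dict) -> None:
    """Create the topic with the confluent-kafka admin client.

    `conf` is assumed to contain keys such as `bootstrap.servers`,
    `security.protocol`, `sasl.mechanisms`, `sasl.username`, `sasl.password`.
    """
    # Imported lazily so the rest of the sketch runs without the package.
    from confluent_kafka.admin import AdminClient, NewTopic

    admin = AdminClient(conf)
    new_topic = NewTopic(
        spec["name"],
        num_partitions=spec["partitions"],
        replication_factor=spec["replication_factor"],
    )
    # create_topics returns a dict of topic name -> future.
    futures = admin.create_topics([new_topic])
    futures[spec["name"]].result()  # raises if topic creation failed


spec = topic_spec()
print(spec)
```

Calling `create_topic(conf, spec)` is only needed if you prefer code over the Add topic dialog; the UI steps above produce the same topic.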

Create a Data Generator with Kafka Connect

In reality, our Kafka topic would probably be populated from an application using the producer API to write messages to it. Here, we’re going to use a data generator that’s available as a connector for Kafka Connect.
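For comparison, here is a rough sketch of what such a producer application might look like. It assumes the third-party confluent-kafka Python package and a `conf` dictionary with your cluster's connection settings. The helper names and the order field names (`orderid`, `itemid`, `orderunits`, `ordertime`) are illustrative assumptions, not the confirmed schema of the Datagen connector.

```python
import json
import random
import time


def make_order(order_id: int) -> dict:
    """Build one illustrative order event (field names are assumptions)."""
    return {
        "orderid": order_id,
        "itemid": f"Item_{random.randint(1, 100)}",
        "orderunits": round(random.uniform(0.1, 10.0), 2),
        "ordertime": int(time.time() * 1000),  # epoch milliseconds
    }


def serialize(order: dict) -> bytes:
    """Encode an order as UTF-8 JSON, the value a producer would send."""
    return json.dumps(order).encode("utf-8")


def produce_orders(conf: dict, count: int, topic: str = "orders") -> None:
    """Send `count` orders to the topic using the producer API."""
    # Imported lazily so the helpers above run without the package.
    from confluent_kafka import Producer

    producer = Producer(conf)
    for order_id in range(count):
        order = make_order(order_id)
        producer.produce(topic, key=str(order_id), value=serialize(order))
        producer.poll(0)  # serve delivery callbacks without blocking
    producer.flush()  # block until all queued messages are delivered
```

The Datagen connector used in the rest of this exercise removes the need to write and run an application like this just to get sample data.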

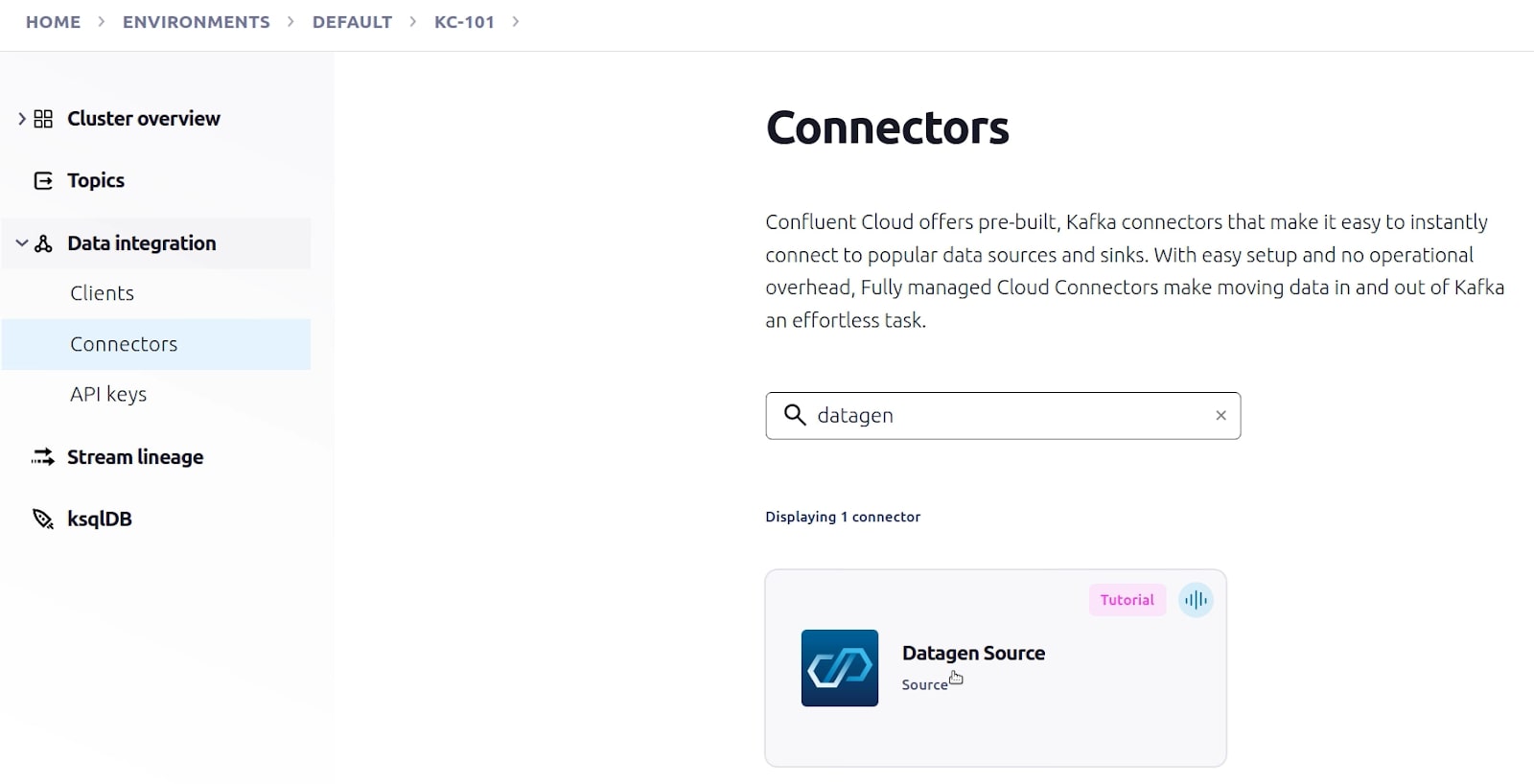

- In Confluent Cloud, go to your cluster’s Connectors page. In the search box, enter datagen.

- Select the Datagen Source connector.

- Under Configuration, click on Orders.

- Under Topic selection, click on Add a new topic.

- Name the topic orders and ensure that the Number of partitions is set to 6.

- Click on Create with defaults.

- Upon returning to Topic selection, click on orders and click Continue.

- Under Kafka credentials, click on Generate Kafka API key & download.

- When the API credentials appear, click Continue.

There is no need to save them as we will not be using them after this exercise.

- On the confirmation screen, the JSON should look like this:

    {
      "name": "DatagenSourceConnector_0",
      "config": {
        "connector.class": "DatagenSource",
        "name": "DatagenSourceConnector_0",
        "kafka.auth.mode": "KAFKA_API_KEY",
        "kafka.api.key": "****************",
        "kafka.api.secret": "****************************************************************",
        "kafka.topic": "orders",
        "output.data.format": "JSON",
        "quickstart": "ORDERS",
        "tasks.max": "1"
      }
    }

- Click Launch to provision the connector. This will take a few moments. Once the provisioning is complete, the view will automatically switch to show the connector Overview page.

- The Overview page shows the current status of the connector as well as several metrics that reflect its general health.

- From the Topics page of your cluster, select the orders topic and then Messages. You should see a steady stream of new messages arriving:

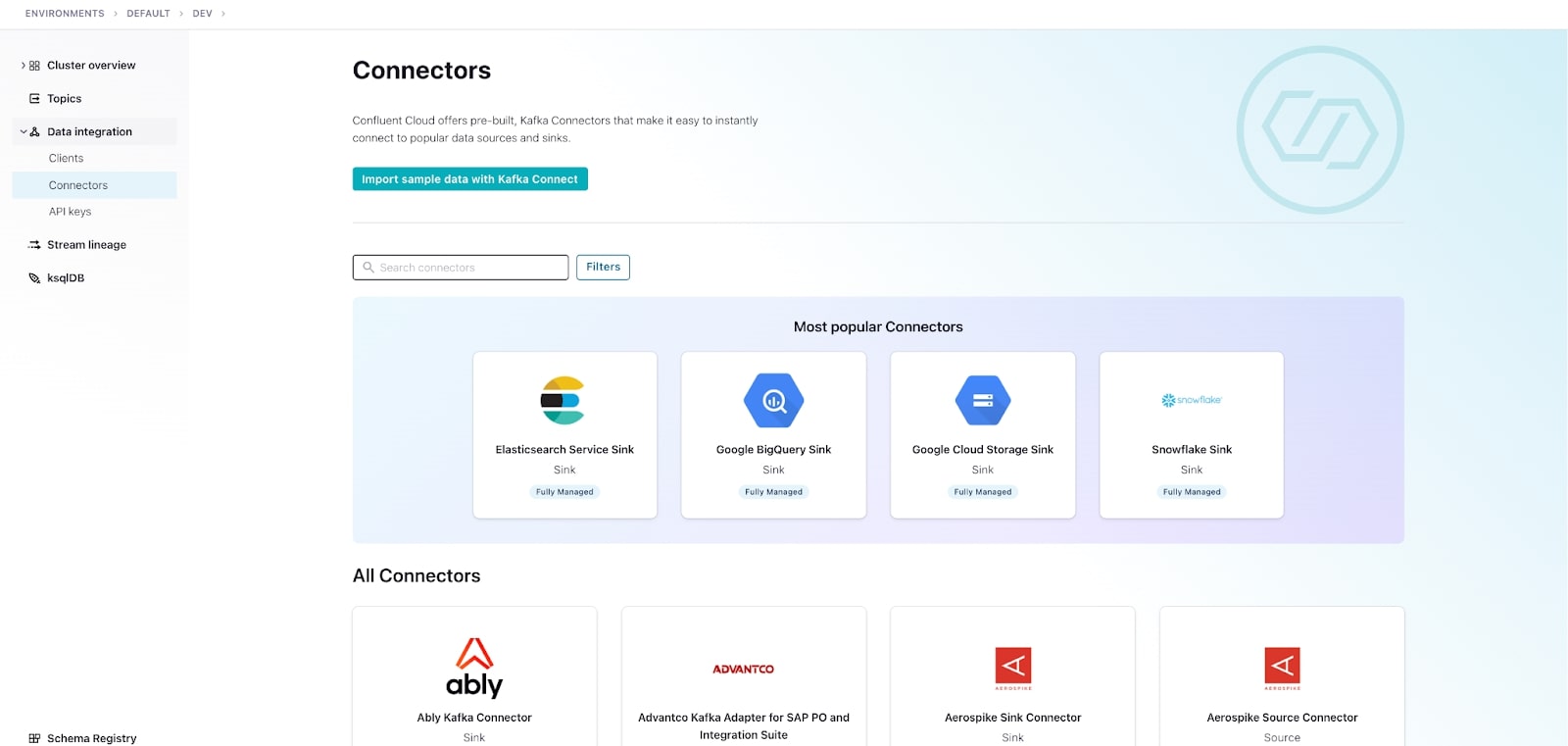

- Keep in mind that this Datagen Source connector is only a jumping-off point for your Kafka Connect journey. As a final step, head on over to the Connectors page and take a look at the other connectors.
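The connector definition shown on the confirmation screen can be reproduced as plain data, which may help if you want to keep it in version control or compare it across environments. The sketch below uses only the Python standard library. The helper names `build_datagen_config` and `redact` and the placeholder credentials are hypothetical, while the keys and values mirror the JSON from this exercise.

```python
import json


def build_datagen_config(name: str, topic: str, api_key: str, api_secret: str) -> dict:
    """Return a connector definition mirroring the confirmation-screen JSON."""
    return {
        "name": name,
        "config": {
            "connector.class": "DatagenSource",
            "name": name,
            "kafka.auth.mode": "KAFKA_API_KEY",
            "kafka.api.key": api_key,
            "kafka.api.secret": api_secret,
            "kafka.topic": topic,
            "output.data.format": "JSON",
            "quickstart": "ORDERS",
            "tasks.max": "1",
        },
    }


def redact(definition: dict) -> dict:
    """Copy the definition with credential values masked, safe for logging."""
    masked = dict(definition["config"])
    for field in ("kafka.api.key", "kafka.api.secret"):
        masked[field] = "*" * len(masked[field])
    return {"name": definition["name"], "config": masked}


# Placeholder credentials for illustration only.
definition = build_datagen_config(
    "DatagenSourceConnector_0", "orders", "my-key", "my-secret"
)
print(json.dumps(redact(definition), indent=2))
```

Masking the key and secret before printing matches what the confirmation screen does, and `redact` works on a copy so the original definition keeps its real values.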

Hands On: Getting Started with Kafka Connect

This exercise will walk you through the process of creating your very first fully-managed Kafka Connector in Confluent Cloud. Let's get started.

To kick us off, you'll need an account for Confluent Cloud. If you don't already have one, go back to the first module in this course to see instructions on how to sign up. Once your account is set up and ready to go, create and launch a basic cluster called KC-101. It may take a few minutes for your cluster to be provisioned. We'll use this cluster for all of the exercises throughout this course.

In this exercise, we're creating a source connector to generate data to Kafka. Now usually you'll have an external data source that Kafka Connect will pull data from to produce that data to a Kafka topic. We don't have a data source to use at the moment, so we'll be creating a Datagen connector which will generate sample data for us. To do this, we'll navigate into Data Integration and select Connectors. There are many fully-managed connectors available in Confluent Cloud, so we'll use the search option to locate the Datagen connector and select it.

The first step for this connector is to select what type of sample data we want it to produce. You can see that there are a host of data options available to you, but for this exercise, we'll be writing orders data, so let's select that.

Next, we need to select a topic that our connector will write the orders data to. We haven't created any topics yet for this cluster, so we'll use the available option to add a topic now. Let's call our topic orders, as that's the test data we'll be creating. At the time of this recording, the default number of partitions is six, so we can leave it at that. Now that the topic is available we can select it and continue.

Now in order for our connector to communicate with our cluster, we need to provide an API key for it to use. You can use an existing API key and secret or create one here as we are doing. For sizing, we'll use the default value of one for the number of tasks. And, finally, let's confirm the connector configuration and launch it.

It can take a few minutes to provision the connector fully. Once it's up and running, we can select the connector name to see more details. Alternatively, you can head on over to the Topics tab, select that orders topic, and verify that messages are being written. As you can see here, the data is being written and formatted perfectly fine.

So there you have it. You've created your first source connector in Confluent Cloud. This is just the tip of the iceberg though. If you head on back to the Connectors tab, you'll see a number of fully-managed connectors available. Following a similar workflow to today's exercise, you can spin up a connector and integrate external systems in just a few minutes.

That said, when you're finished running this exercise on your own, you'll want to delete the Datagen connector, preventing it from unnecessarily accruing cost and exhausting your promotional credits. Let's walk through the process together. First, we'll navigate back to the Datagen connector. Once there, we can go ahead and delete it. We'll leave the orders topic and KC-101 cluster, since it will be used during exercises that follow in this course. See you then.
