How can you rekey records in a Kafka topic, making the key a variation of data currently in the payload?

This tutorial installs Confluent Platform using Docker. Before proceeding:

• Install Docker Desktop (version 4.0.0 or later) or Docker Engine (version 19.03.0 or later) if you don’t already have it

• Install the Docker Compose plugin if you don’t already have it. This isn’t necessary if you have Docker Desktop since it includes Docker Compose.

• Start Docker if it’s not already running, either by starting Docker Desktop or, if you manage Docker Engine with systemd, via systemctl

• Verify that Docker is set up properly by ensuring no errors are output when you run docker info and docker compose version on the command line

ksqlDB has many built-in functions that help with processing records in streaming data, like ABS and SUM. Extracting the area code from a phone number is easiest done with a regular expression. To do this you can implement custom functions in Java that go beyond the built-in functions.

Get started by making a new directory anywhere you’d like for this project:

mkdir rekey-with-function && cd rekey-with-function

Then make the following directories:

mkdir src extensionsYou can create a Gradle build file to build your Java code into a jar file that is supplied to KSQL. Create the following Gradle build file, named build.gradle for the project:

buildscript {

repositories {

mavenCentral()

}

}

plugins {

id 'java'

}

// Use Java 11 since CP Docker images package Java 11 as of CP 7.3.x

sourceCompatibility = JavaVersion.VERSION_11

targetCompatibility = JavaVersion.VERSION_11

version = '0.0.1'

repositories {

mavenCentral()

maven {

url 'https://packages.confluent.io/maven'

}

}

dependencies {

implementation 'io.confluent.ksql:ksql-udf:5.4.0'

testImplementation 'junit:junit:4.12'

}

task copyJar(type: Copy) {

from jar

into "extensions/"

}

build.dependsOn copyJar

test {

testLogging {

outputs.upToDateWhen { false }

showStandardStreams = true

exceptionFormat = 'full'

}

}

The build.gradle also contains a copyJar step to copy the jar file to the extensions/ directory where it will be picked up by KSQL. This is convenient when you are iterating on a function. For example, you might have tested your UDF against your suite of unit tests and you are now ready to test against steams in KSQL. With the jar in the correct place a restart of ksqlDB will load your updated jar.

And be sure to run the following command to obtain the Gradle wrapper:

gradle wrapper

Create a directory for the Java files in this project:

mkdir -p src/main/java/io/confluent/developer

Then create the following file at src/main/java/io/confluent/developer/RegexReplace.java. This file contains the Java logic of your custom function. Read through the code to familiarize yourself. You will see that the code is checking for null values in each of the parameters. We do this because, the custom function could be used with unpopulated data that will send a null to the input parameter. As as extra sanity we check the regex and replacement parameters are not sent null.

package io.confluent.developer;

import io.confluent.ksql.function.udf.Udf;

import io.confluent.ksql.function.udf.UdfDescription;

import io.confluent.ksql.function.udf.UdfParameter;

@UdfDescription(name = "regexReplace", description = "Replace string using a regex")

public class RegexReplace {

@Udf(description = "regexReplace string using a regex")

public String regexReplace(

@UdfParameter(value = "input", description = "If null, then function returns null.") final String input,

@UdfParameter(value = "regex", description = "If null, then function returns null.") final String regex,

@UdfParameter(value = "replacement", description = "If null, then function returns null.") final String replacement) {

if (input == null || regex == null || replacement == null) {

return null;

}

return input.replaceAll(regex, replacement);

}

}See more about ksqlDB User-Defined Functions at the KSQL Custom Function Reference.

In your terminal, run:

./gradlew build

The copyJar gradle task will automatically deliver the jar to the extensions/ directory.

Next, create the following docker-compose.yml file to obtain Confluent Platform (for Kafka in the cloud, see Confluent Cloud):

version: '2'

services:

broker:

image: confluentinc/cp-kafka:7.4.1

hostname: broker

container_name: broker

ports:

- 29092:29092

environment:

KAFKA_BROKER_ID: 1

KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: PLAINTEXT:PLAINTEXT,PLAINTEXT_HOST:PLAINTEXT,CONTROLLER:PLAINTEXT

KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://broker:9092,PLAINTEXT_HOST://localhost:29092

KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1

KAFKA_GROUP_INITIAL_REBALANCE_DELAY_MS: 0

KAFKA_TRANSACTION_STATE_LOG_MIN_ISR: 1

KAFKA_TRANSACTION_STATE_LOG_REPLICATION_FACTOR: 1

KAFKA_PROCESS_ROLES: broker,controller

KAFKA_NODE_ID: 1

KAFKA_CONTROLLER_QUORUM_VOTERS: 1@broker:29093

KAFKA_LISTENERS: PLAINTEXT://broker:9092,CONTROLLER://broker:29093,PLAINTEXT_HOST://0.0.0.0:29092

KAFKA_INTER_BROKER_LISTENER_NAME: PLAINTEXT

KAFKA_CONTROLLER_LISTENER_NAMES: CONTROLLER

KAFKA_LOG_DIRS: /tmp/kraft-combined-logs

CLUSTER_ID: MkU3OEVBNTcwNTJENDM2Qk

schema-registry:

image: confluentinc/cp-schema-registry:7.3.0

hostname: schema-registry

container_name: schema-registry

depends_on:

- broker

ports:

- 8081:8081

environment:

SCHEMA_REGISTRY_HOST_NAME: schema-registry

SCHEMA_REGISTRY_KAFKASTORE_BOOTSTRAP_SERVERS: broker:9092

ksqldb-server:

image: confluentinc/ksqldb-server:0.28.2

hostname: ksqldb-server

container_name: ksqldb-server

depends_on:

- broker

- schema-registry

volumes:

- ./extensions:/etc/ksqldb/ext

ports:

- 8088:8088

environment:

KSQL_CONFIG_DIR: /etc/ksqldb

KSQL_KSQL_EXTENSION_DIR: /etc/ksqldb/ext/

KSQL_LOG4J_OPTS: -Dlog4j.configuration=file:/etc/ksqldb/log4j.properties

KSQL_BOOTSTRAP_SERVERS: broker:9092

KSQL_HOST_NAME: ksqldb-server

KSQL_LISTENERS: http://0.0.0.0:8088

KSQL_CACHE_MAX_BYTES_BUFFERING: 0

KSQL_KSQL_SCHEMA_REGISTRY_URL: http://schema-registry:8081

ksqldb-cli:

image: confluentinc/ksqldb-cli:0.28.2

container_name: ksqldb-cli

depends_on:

- broker

- ksqldb-server

entrypoint: /bin/sh

tty: true

environment:

KSQL_CONFIG_DIR: /etc/ksqldb

volumes:

- ./src:/opt/app/src

- ./test:/opt/app/test

Note docker-compose.yml has configured the ksqldb-server container with KSQL_KSQL_EXTENSION_DIR: "/etc/ksql/ext/", and maps the local extensions directory to /etc/ksql/ext in the container. ksqlDB is now configured to look in this location for your extensions such as custom functions.

Launch the platform by running:

docker compose up -d

To begin developing interactively, open up the ksqlDB CLI:

docker exec -it ksqldb-cli ksql http://ksqldb-server:8088

Firstly, let’s confirm the UDF jar has been loaded correctly. You will see REGEXREPLACE in the list of functions.

SHOW FUNCTIONS;You can see some addition detail about the function with DESCRIBE FUNCTION.

DESCRIBE FUNCTION REGEXREPLACE;The result gives you a description of the function including input parameters and the return type.

Name : REGEXREPLACE

Overview : Replace string using a regex

Type : SCALAR

Jar : /etc/ksqldb/ext/rekey-with-function-0.0.1.jar

Variations :

Variation : REGEXREPLACE(input VARCHAR, regex VARCHAR, replacement VARCHAR)

Returns : VARCHAR

Description : regexReplace string using a regex

input : If null, then function returns null.

regex : If null, then function returns null.

replacement : If null, then function returns null.

Create a Kafka topic and stream of customers.

CREATE STREAM customers (id int key, firstname string, lastname string, phonenumber string)

WITH (kafka_topic='customers',

partitions=2,

value_format = 'avro');

Then insert the customer data.

INSERT INTO customers (id, firstname, lastname, phonenumber) VALUES (1, 'Sleve', 'McDichael', '(360) 555-8909');

INSERT INTO customers (id, firstname, lastname, phonenumber) VALUES (2, 'Onson', 'Sweemey', '206-555-1272');

INSERT INTO customers (id, firstname, lastname, phonenumber) VALUES (3, 'Darryl', 'Archideld', '425.555.6940');

INSERT INTO customers (id, firstname, lastname, phonenumber) VALUES (4, 'Anatoli', 'Smorin', '509.555.8033');

INSERT INTO customers (id, firstname, lastname, phonenumber) VALUES (5, 'Rey', 'McSriff', '360 555 6952');

INSERT INTO customers (id, firstname, lastname, phonenumber) VALUES (6, 'Glenallen', 'Mixon', '(253) 555-7050');

INSERT INTO customers (id, firstname, lastname, phonenumber) VALUES (7, 'Mario', 'McRlwain', '360 555 7598');

INSERT INTO customers (id, firstname, lastname, phonenumber) VALUES (8, 'Kevin', 'Nogilny', '206.555.8090');

INSERT INTO customers (id, firstname, lastname, phonenumber) VALUES (9, 'Tony', 'Smehrik', '425-555-7926');

INSERT INTO customers (id, firstname, lastname, phonenumber) VALUES (10, 'Bobson', 'Dugnutt', '509.555.8795');

Now that you have stream with some events in it, let’s read them out. The first thing to do is set the following properties to ensure that you’re reading from the beginning of the stream:

SET 'auto.offset.reset' = 'earliest';

We can view the results of the newly created REGEXREPLACE with the messages:

SELECT ID, FIRSTNAME, LASTNAME, PHONENUMBER, REGEXREPLACE(phonenumber, '\\(?(\\d{3}).*', '$1') as area_code

FROM CUSTOMERS

EMIT CHANGES

LIMIT 10;

This should yield roughly the following output. The order will be different as we are delivering to 2 partitions:

+--------------------+--------------------+--------------------+--------------------+--------------------+

|ID |FIRSTNAME |LASTNAME |PHONENUMBER |AREA_CODE |

+--------------------+--------------------+--------------------+--------------------+--------------------+

|2 |Onson |Sweemey |206-555-1272 |206 |

|1 |Sleve |McDichael |(360) 555-8909 |360 |

|5 |Rey |McSriff |360 555 6952 |360 |

|8 |Kevin |Nogilny |206.555.8090 |206 |

|9 |Tony |Smehrik |425-555-7926 |425 |

|3 |Darryl |Archideld |425.555.6940 |425 |

|4 |Anatoli |Smorin |509.555.8033 |509 |

|6 |Glenallen |Mixon |(253) 555-7050 |253 |

|7 |Mario |McRlwain |360 555 7598 |360 |

|10 |Bobson |Dugnutt |509.555.8795 |509 |

Limit Reached

Query terminated

Note REGEXREPLACE has extracted the area code from several differently formatted phone numbers.

Now, using ksqlDB’s appropriately-named PARTITION BY clause, we can apply the results of REGEXREPLACE as the key for each message and write the result to a new stream.

As we’re not partitioning by an existing column, ksqlDB will add a new column to the customers_by_area_code stream based on the result of our function call.

We provide the name AREA_CODE for this new column in the projection.

CREATE STREAM customers_by_area_code

WITH (KAFKA_TOPIC='customers_by_area_code') AS

SELECT

REGEXREPLACE(phonenumber, '\\(?(\\d{3}).*', '$1') AS AREA_CODE,

id,

firstname,

lastname,

phonenumber

FROM customers

PARTITION BY REGEXREPLACE(phonenumber, '\\(?(\\d{3}).*', '$1')

EMIT CHANGES;

Now, we can verify it’s working:

SELECT AREA_CODE, FIRSTNAME, LASTNAME, PHONENUMBER

FROM CUSTOMERS_BY_AREA_CODE

EMIT CHANGES

LIMIT 10;

This should yield roughly the following output. The order might vary from what you see here, but the data has been repartitioned such that all customers in the same area code are now in exactly one partition.

+--------------------+--------------------+--------------------+--------------------+

|AREA_CODE |FIRSTNAME |LASTNAME |PHONENUMBER |

+--------------------+--------------------+--------------------+--------------------+

|360 |Sleve |McDichael |(360) 555-8909 |

|206 |Onson |Sweemey |206-555-1272 |

|360 |Rey |McSriff |360 555 6952 |

|425 |Darryl |Archideld |425.555.6940 |

|360 |Mario |McRlwain |360 555 7598 |

|206 |Kevin |Nogilny |206.555.8090 |

|509 |Anatoli |Smorin |509.555.8033 |

|425 |Tony |Smehrik |425-555-7926 |

|253 |Glenallen |Mixon |(253) 555-7050 |

|509 |Bobson |Dugnutt |509.555.8795 |

Limit Reached

Query terminated

Now that you have a series of statements that’s doing the right thing, the last step is to put them into a file so that they can be used outside the CLI session. Create a file at src/statements.sql with the following content:

CREATE STREAM customers (id int key, firstname string, lastname string, phonenumber string)

WITH (kafka_topic='customers',

partitions=2,

value_format = 'avro');

CREATE STREAM customers_by_area_code

WITH (KAFKA_TOPIC='customers_by_area_code') AS

SELECT * FROM customers

PARTITION BY REGEXREPLACE(phonenumber, '\\(?(\\d{3}).*', '$1')

EMIT CHANGES;

Then, create a directory for the tests to live in:

mkdir -p src/test/java/io/confluent/developer

Create the following test file at src/test/java/io/confluent/developer/RegexReplaceTest.java:

package io.confluent.developer;

import static org.junit.Assert.*;

import io.confluent.developer.RegexReplace;

import org.junit.Test;

public class RegexReplaceTest {

@Test

public void testRegexReplace() {

RegexReplace udf = new RegexReplace();

String regEx = "\\(?(\\d{3}).*";

assertEquals("206", udf.regexReplace("206-555-1272", regEx, "$1"));

assertEquals("425", udf.regexReplace("425.555.6940", regEx, "$1"));

assertEquals("360", udf.regexReplace("360 555 6952", regEx, "$1"));

assertEquals("253", udf.regexReplace("(253) 555-7050", regEx, "$1"));

assertEquals("425", udf.regexReplace("425-555-7926", regEx, "$1"));

// test null parameters return null

assertNull(udf.regexReplace(null, regEx, "$1"));

assertNull(udf.regexReplace("425-555-7926", null, "$1"));

assertNull(udf.regexReplace("425-555-7926", regEx, null));

}

}

Now run the test, which is as simple as:

./gradlew test

Instead of running a local Kafka cluster, you may use Confluent Cloud, a fully managed Apache Kafka service.

Sign up for Confluent Cloud, a fully managed Apache Kafka service.

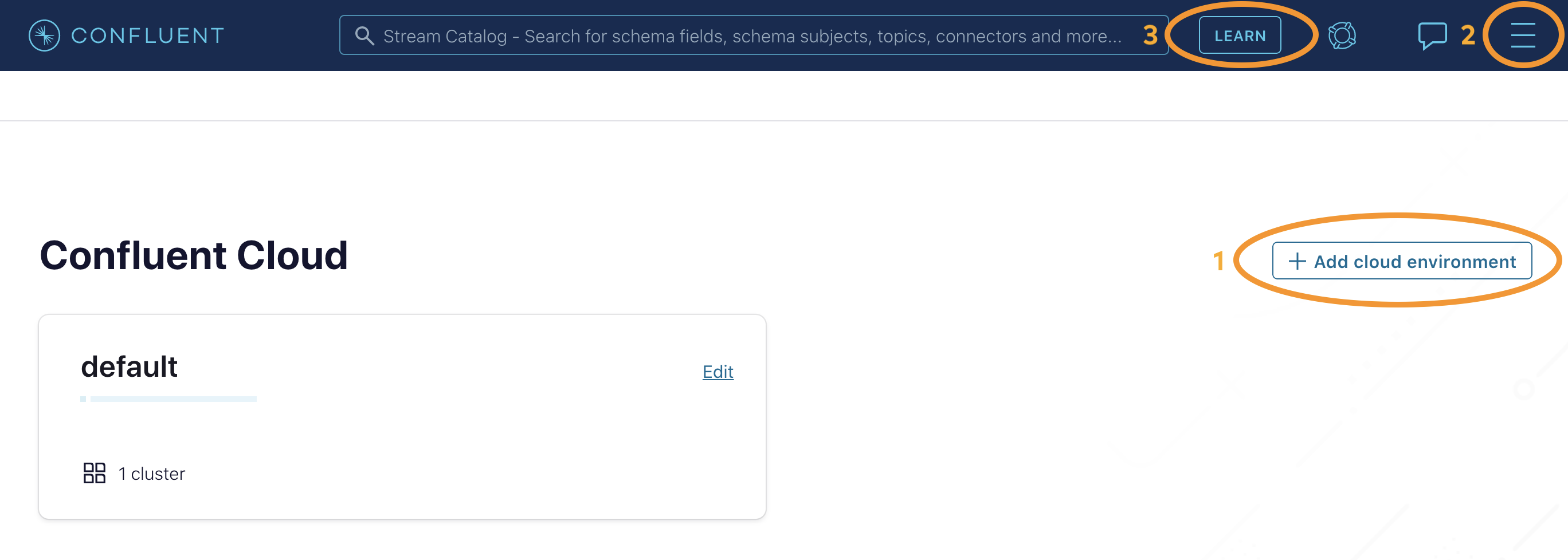

After you log in to Confluent Cloud Console, click Environments in the lefthand navigation, click on Add cloud environment, and name the environment learn-kafka. Using a new environment keeps your learning resources separate from your other Confluent Cloud resources.

From the Billing & payment section in the menu, apply the promo code CC100KTS to receive an additional $100 free usage on Confluent Cloud (details). To avoid having to enter a credit card, add an additional promo code CONFLUENTDEV1. With this promo code, you will not have to enter a credit card for 30 days or until your credits run out.

Click on LEARN and follow the instructions to launch a Kafka cluster and enable Schema Registry.

Next, from the Confluent Cloud Console, click on Clients to get the cluster-specific configurations, e.g., Kafka cluster bootstrap servers and credentials, Confluent Cloud Schema Registry and credentials, etc., and set the appropriate parameters in your client application.

Now you’re all set to run your streaming application locally, backed by a Kafka cluster fully managed by Confluent Cloud.